Google Warns AI-Powered Hacking Has Become Industrial-Scale Threat

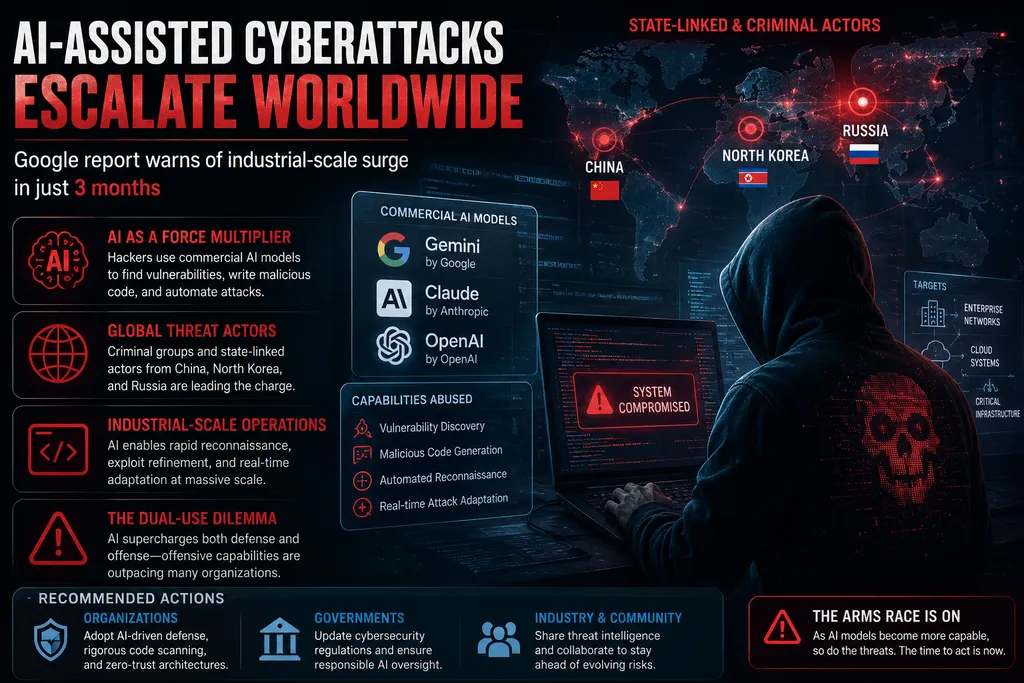

In a stark new report, Google’s threat intelligence team says that AI-assisted cyberattacks have escalated dramatically, moving from experimental use to a full-blown industrial-scale operation in just three months.

Criminal groups and state-linked actors from China, North Korea, and Russia are now routinely using commercial AI models, including Google’s Gemini, Anthropic’s Claude, and OpenAI’s offerings, to discover vulnerabilities, generate malicious code, and scale attacks across software systems worldwide.

The findings highlight AI’s dual-use nature: while large language models excel at coding and problem-solving, they are proving equally adept at exploiting weaknesses in everything from enterprise networks to critical infrastructure. Hackers use these tools to refine exploits, automate reconnaissance, and even adapt attacks in real time, dramatically lowering the barrier for sophisticated operations.

“AI has supercharged both defense and offense,” a Google source noted, “but the offensive capabilities are evolving faster than many organizations can respond.”

The report comes amid broader concerns over AI security. Earlier this month, similar warnings emerged about AI being used to develop major security flaws, prompting calls for stricter controls on model access and output filtering.

Industry experts urge immediate action. Companies are advised to accelerate their adoption of AI-driven defensive tools, implement rigorous code scanning, and adopt zero-trust architectures. Governments are under pressure to update cybersecurity regulations to address AI-specific risks.

This development underscores a growing tension in the AI era: rapid innovation brings immense benefits but also amplifies threats. As models grow more capable, the cybersecurity arms race is intensifying.
