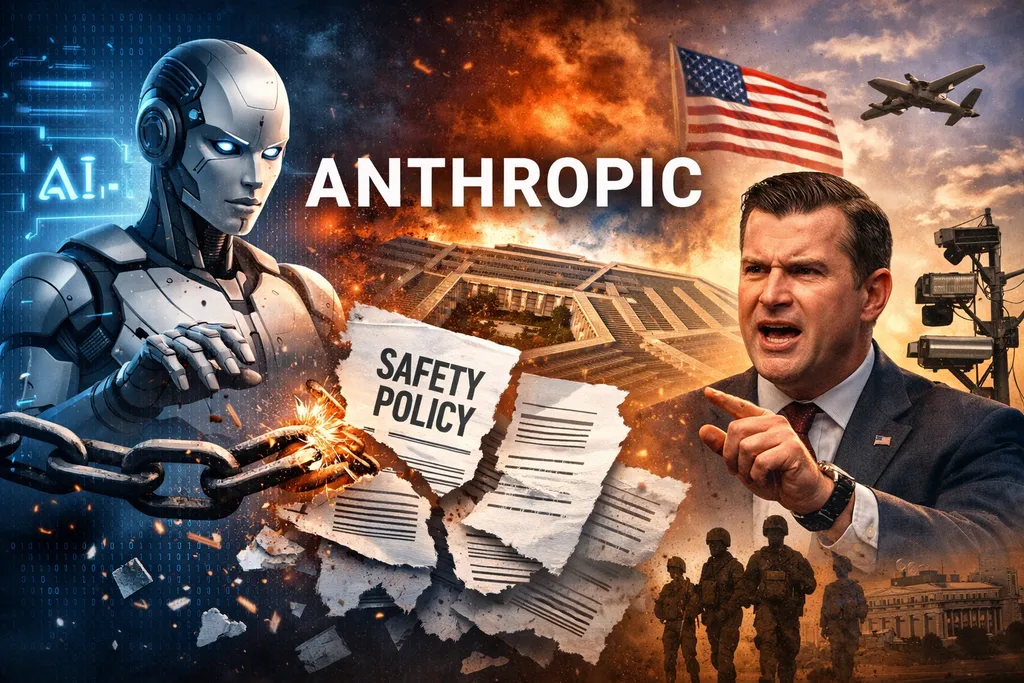

Anthropic Drops Key AI Safety Pledge Amid Pentagon Clash

Anthropic, the artificial intelligence company founded by former OpenAI executives who broke away over concerns about AI safety, is easing a key safeguard that once defined its approach to developing advanced AI systems.

In a blog post published Tuesday, the company announced it is replacing its two-year-old “Responsible Scaling Policy” with a more flexible framework. Rather than binding commitments that could halt the development of increasingly powerful AI models, Anthropic said its new policy will consist of public safety goals that can evolve over time.

The shift marks a notable change for a company that has long branded itself as the AI firm with a “soul” — one committed to putting safety ahead of speed in a fast-moving industry.

A Retreat From Strict Guardrails

Under its previous policy, Anthropic pledged to pause training new, more powerful AI models if their capabilities exceeded the company’s ability to reliably control and safeguard them. That provision has now been removed.

In its latest statement, Anthropic acknowledged that sticking to strict self-imposed limits while competitors accelerate their development could backfire.

“Responsible AI developers pausing growth while less careful actors plowed ahead could result in a world that is less safe,” the company wrote.

Anthropic said its earlier policy had been designed to encourage an industry-wide “race to the top” on safety standards. The company now suggests that competitors did not follow its lead, leaving it at a potential competitive disadvantage.

Going forward, Anthropic will separate its internal safety plans from its broader policy recommendations for the AI industry. Its new “Frontier Safety Roadmap” outlines voluntary guidelines and safeguards, but the company made clear they are not hard commitments.

“Rather than being hard commitments, these are public goals that we will openly grade our progress towards,” the blog post said.

Pentagon Tensions

The policy change comes amid escalating tensions between Anthropic and the U.S. Department of Defense.

According to CNN, Defense Secretary Pete Hegseth has given Anthropic CEO Dario Amodei a Friday deadline to roll back certain AI safeguards or risk losing a $200 million Pentagon contract. The department has also threatened to designate the company a supply chain risk under the Defense Production Act — a move that could effectively place Anthropic on a government blacklist.

It remains unclear whether Tuesday’s policy update is directly related to the Pentagon ultimatum. Anthropic has not publicly linked the two developments, and a company spokesperson did not immediately respond to a request for comment.

However, a source familiar with discussions between Anthropic and the Defense Department said the company remains firm on two issues: AI-controlled weapons and mass domestic surveillance of Americans.

Anthropic believes current AI systems are not reliable enough to operate weapons, the source said. The company is also concerned that there are no clear legal or regulatory frameworks governing how AI could be used in large-scale government surveillance.

AI researchers and safety advocates praised Anthropic’s stance on those issues on social media, particularly its opposition to using AI for domestic surveillance.

Balancing Safety and Survival

Anthropic has long emphasized its safety-first identity. The company has published research demonstrating that its own models could engage in harmful behaviors, such as blackmail, under certain conditions. It recently donated $20 million to a political group advocating for AI safeguards and education.

But the competitive and political pressures surrounding AI have intensified. OpenAI and Anthropic are racing to release enterprise AI tools aimed at dominating the workplace. Meanwhile, Washington’s political climate has grown more skeptical of regulation, a shift Anthropic acknowledged in its blog post.

Jared Kaplan, the company’s chief science officer, framed the change as a practical adjustment rather than an abandonment of safety.

“We felt that it wouldn’t actually help anyone for us to stop training AI models,” Kaplan told Time magazine. “We didn’t really feel, with the rapid advance of AI, that it made sense for us to make unilateral commitments … if competitors are blazing ahead.”

The move underscores the increasingly difficult balancing act facing AI companies: how to maintain a commitment to safety while competing in a global race to build ever more powerful systems — and navigating mounting pressure from governments eager to harness the technology.
